Space

Blue Origin

Ref ID: ( G00112 )

Posted

: 2026-06-01 13:21:34

Blue Moon Rising: Blue Origin's 2026 Lunar Landing Mission

Blue Origin's Blue Moon: Latest Updates in Lunar Exploration 2026

Blue Origin's Blue Moon lunar lander program is advancing rapidly in 2026, positioning itself as a key player in NASA's Artemis campaign to return humans to the Moon. The robotic Blue Moon Mark 1 (MK1), nicknamed Endurance, recently completed critical environmental testing inside NASA Johnson Space Center's Thermal Vacuum Chamber A, simulating the vacuum and extreme temperatures of spaceflight. This milestone validates the lander's structural integrity and system performance ahead of its planned uncrewed mission.

Targeted for launch no earlier than fall 2026 aboard Blue Origin's New Glenn heavy-lift rocket, the MK1 mission, designated "Moon Base I", will deliver two NASA science payloads to the lunar South Pole's Shackleton Connecting Ridge. These include the Stereo Cameras for Lunar Plume-Surface Studies, which will capture engine-plume interactions during landing, and a Laser Retroreflective Array to aid future spacecraft navigation. The mission operates under NASA's Commercial Lunar Payload Services (CLPS) initiative, which leverages private companies to accelerate affordable lunar access.

Blue Origin is concurrently developing the larger, crew-capable Blue Moon Mark 2 (MK2), designed to transport astronauts from lunar orbit to the surface for Artemis missions. In 2023, NASA selected Blue Origin as a second provider for its Human Landing System program alongside SpaceX, diversifying options for crewed lunar landings. Under NASA's revised Artemis architecture, Artemis III (now targeting 2027) may serve as an orbital test for both Starship and Blue Moon landers, with Artemis IV potentially becoming the next crewed surface mission.

Challenges remain. Both Blue Origin and SpaceX face schedule pressures, with NASA's Inspector General flagging delays in lander development. Nevertheless, Blue Origin has demonstrated momentum: a second MK1 unit is already in production at its Florida facility, and the company has completed modal testing to validate the lander's response to launch vibrations.

The broader context matters: NASA plans up to four robotic landings in 2026 and as many as 30 in 2027 under CLPS, using commercial partners to scout landing sites, test technologies, and deploy infrastructure ahead of astronaut arrivals. Blue Moon's success would not only validate critical technologies (precision landing, cryogenic propulsion, and autonomous navigation) but also strengthen the public-private partnership model central to sustainable lunar exploration.

Ref ID: ( G00112 )

Posted

: 2026-06-01 13:21:34

The Hidden Danger in the Scrap Pile: The Invisible Threat of Radioactive Waste

Radiation isn’t just something found in sci-fi movies or isolated nuclear power plants. Today, a silent and dangerous crisis is unfolding in regular trash bins, scrap yards, and local markets.

Radiation exposure from waste stems primarily from the improper disposal of radioactive materials into regular trash or recycling. Sources include radioactively contaminated alloys, discarded medical equipment, industrial gauges, spent nuclear fuel, and metal from mine machinery that was exposed to gamma and other radiation and then illegally sold through scrap recycling facilities to poor customers who are unaware of its effects. All of this poses a severe, immediate threat to public health.

How Invisible Danger Enters Our Homes

When heavy industrial mining machinery, old hospital x-ray components, or industrial gauges reach the end of their lifespan, they require strict, specialized disposal. Instead, criminal negligence or a lack of regulation often leads to these items being tossed into standard landfills or sold to scrap yards.

From there, the consequences are devastating:

The Cycle of Exploitation: Highly toxic, irradiated metals are often melted down or sold directly to low-income buyers who are completely unaware of the danger. A piece of scrap metal used to build a home or a tool could secretly be emitting lethal doses of radiation.

The Recycling Loophole: When radioactive materials enter standard recycling streams, they contaminate entire batches of consumer goods, spreading the danger far and wide.

The Human Cost: What Radiation Does to the Body

You cannot see, smell, or taste ionizing radiation, but its effects on the human body are aggressive and lasting. Exposure to ionizing radiation damages DNA—increasing long-term cancer risks and potentially causing acute radiation sickness.

Short-Term (Acute Radiation Sickness): High-level exposure over a short period can cause immediate cellular degradation, leading to nausea, severe burns, hair loss, and organ failure.

Long-Term (DNA & Cancer Risks): Even low-level, prolonged exposure can damage human DNA. Over years, this damage raises the risk of aggressive cancers and tumors, and exposure during pregnancy can cause severe birth defects.

What Needs to Change?

Protecting vulnerable communities from toxic waste requires urgent action on multiple fronts:

1. Stricter Scrap Yard Regulation: Enhanced monitoring and mandatory radiation scanning at all metal recycling and salvage facilities.

2. Community Education: Informing buyers and scrap workers about the physical warning signs of industrial and medical waste (such as the trefoil radiation symbol).

3. Severe Penalties: Holding corporations and illegal sellers accountable for the reckless disposal of industrial and nuclear waste.

Radioactive waste does not belong in our recycling bins, and it certainly does not belong in the hands of unsuspecting families. It’s time to demand tighter regulations and protect our communities from this invisible killer.

PublicHealth EnvironmentalJustice RadiationAwareness SafeRecycling

From

[Admin]

Posted

: 2026-05-31 14:48:54

Ref ID: ( G00111 )


Hear the drumbeat of the earth, friends. It is calling.

The dry winds have swept the Lowveld, the acacia stands bare but watchful, and beneath the wide African sky, the ancient rhythm begins again. When the first summer rains kiss the red soil and the rivers stir from their sleep, the herds will move. Not as wanderers, but as one breathing tide.

In Kruger, the wild does not rush without reason. The wildebeest gather when green whispers across the veld—usually as the rainy season peaks, between December and March. But the true gathering? It swells as the dry months draw near, when the pans crack and the great rivers shrink to silver threads. That is when the hooves drum the soil, when dust rises like morning mist, and the sky fills with the sharp cries of circling tawny eagles.

Come. Stand where the elders stood. Let the sun warm your shoulders as the plains tremble beneath a thousand steady steps. Bring your eyes, your patience, your quiet heart. Watch as the old paths unfold—not in panic, but in harmony with the land. The river crossings will test their courage. The calves will find their legs in the dust. And you, my people, will witness life itself, unbroken and wild.

Uyabona? Do you see it? The bush is ready. The herds are moving along the Letaba, the Olifants, and the fringes of the Limpopo. Pack light. Speak soft. Walk with respect. The veld remembers those who come as guests, not masters.

When the moon hangs low and the jackal calls his evening song, the earth will speak. Will you be there to listen?

Join us beneath the baobab’s wide shadow. Secure your journey. Step into the rhythm. The migration does not wait—but it welcomes those who arrive with open eyes and a grateful spirit.

Siyakwamukela. We welcome you. The wild is calling. Answer it.

When to Catch the Next Wildebeest Mass Migration.

Year-Round Migration Cycle:

January – March: Calving Season

The herds congregate in the nutrient-rich, short-grass plains of the southern Serengeti in Tanzania and the Ngorongoro Conservation Area. More than 500,000 calves are typically born within a few weeks, attracting predators such as lions, hyenas, and cheetahs.

April – May: The Rut

As the southern plains begin to dry, the mega-herds move northwest in long columns (sometimes up to 40 km long) towards the Moru Kopjes and the Western Corridor. This is the action-packed breeding season.

June – July: Grumeti River Crossings

The herds enter the Western Corridor of the Serengeti and must make their first major, dangerous river crossings at the Grumeti River, which is notorious for its massive Nile crocodiles.

July – September: The Mara River Crossings

This is the iconic window of the migration. The herds push north into the Lamai Wedge and arrive at the swollen Mara River, the final barrier to the lush grasslands of Kenya's Maasai Mara. The chaotic, survival-driven crossings here are considered one of the greatest natural spectacles on Earth.

October – December: The Return Trek

The herds spread out across the Maasai Mara. As the short rains fall in the south, the migration begins the long journey back down through the eastern Serengeti to start the cycle all over again.

Ref ID: ( G00110 )

Posted

: 2026-05-31 14:09:01

Many architects are called on to create new projects that stand as symbols of social progress—but none delivered as regularly, as unexpectedly and as spectacularly as Zaha Hadid. Her successes were so consistent, she received the highest honours from civic, academic and professional institutions across the globe. Her practice remains one of the world’s most inventive architectural studios—and has been for almost 40 years.

Born in Baghdad, Iraq in 1950, Zaha Hadid studied mathematics at the American University of Beirut before moving to London in 1972 to attend the Architectural Association (AA) School where she received the Diploma Prize in 1977.

Ref ID: ( G00109 )

Posted

: 2026-05-30 10:32:31

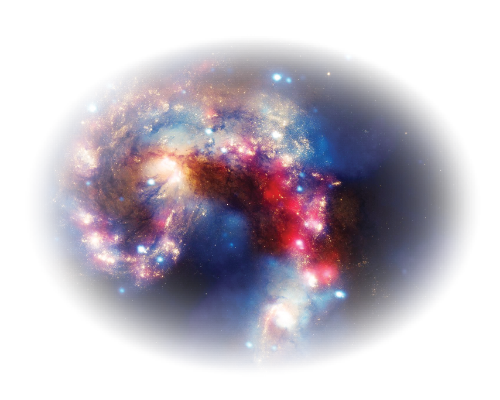

The Andromeda Galaxy (M31, NGC 224) is a barred spiral galaxy (type Sb) at 780 kpc (2.54 Mly), the nearest major galaxy to the Milky Way and the Local Group's most massive member. Its stellar mass is ~1.0-1.5×10¹¹ M☉; total mass including the dark matter halo is ~1.2-2.0×10¹² M☉. The stellar disk spans ~220,000 ly in diameter. M31 hosts ~10¹² stars, with a prominent bulge containing a supermassive black hole (M31*) of mass ~1.4×10⁸ M☉. Its structure comprises a thin disk with spiral arms rich in young, metal-rich Population I stars and HII regions, a thick disk, and an extended halo of old, metal-poor Population II stars and ~460 globular clusters. Kinematic data indicate a radial velocity of -110 km/s toward the Milky Way; combined with proper motion measurements (Gaia, HST), ΛCDM simulations predict a merger in ~4.5 Gyr, forming a giant elliptical (Milkdromeda). M31 exhibits a star-forming ring at ~10 kpc, likely from a minor merger ~2 Gyr ago. Its interstellar medium contains ~10⁹ M☉ of neutral hydrogen (HI) and molecular gas, with a metallicity gradient declining radially. As the closest large spiral, M31 enables high-resolution studies of stellar populations, dynamics, and feedback processes critical for testing galaxy formation models within the ΛCDM framework.
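The distance and approach speed quoted above can be sanity-checked with a few lines of arithmetic. The sketch below is only an illustration: the function and constant names are made up for this example, the conversion factors are standard rounded values, and the travel time assumes a constant 110 km/s straight-line approach, which real merger simulations do not.

```python
# Rough consistency check for the M31 figures quoted in the text.
# All names here are illustrative; constants are standard rounded values.

KM_PER_KPC = 3.0857e16        # kilometres in one kiloparsec
LY_PER_KPC = 3261.56          # light-years in one kiloparsec
SECONDS_PER_GYR = 3.15576e16  # seconds in 10^9 Julian years


def kpc_to_mly(distance_kpc: float) -> float:
    """Convert a distance in kiloparsecs to millions of light-years."""
    return distance_kpc * LY_PER_KPC / 1e6


def constant_speed_travel_time_gyr(distance_kpc: float, speed_km_s: float) -> float:
    """Time in Gyr to cover a distance at a fixed speed (no acceleration)."""
    seconds = distance_kpc * KM_PER_KPC / speed_km_s
    return seconds / SECONDS_PER_GYR


distance_mly = kpc_to_mly(780.0)
naive_time_gyr = constant_speed_travel_time_gyr(780.0, 110.0)

print(f"780 kpc is about {distance_mly:.2f} Mly")
print(f"Constant-speed approach time: about {naive_time_gyr:.1f} Gyr")
```

The conversion reproduces the 2.54 Mly figure, while the constant-speed estimate comes out near 6.9 Gyr. One plausible reason the simulated ~4.5 Gyr is shorter is that the two galaxies accelerate toward each other under mutual gravity, which this sketch ignores.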

Ref ID: ( G00108 )

Posted

: 2026-05-30 00:46:27

The GHz race is over. Modern speed isn’t about how fast a single core ticks, but how many CPU and GPU cores collaborate in tandem. Apple’s unified silicon merges them into a single pool, sharing memory and bandwidth to orchestrate multithreaded workloads with surgical efficiency. AMD counters with modular chiplets, stacking independent CPU and GPU domains to maximize raw parallel throughput. Where Apple harmonizes total cores for seamless execution, AMD multiplies them for brute-force compute. Today, performance isn’t measured in clock cycles, but in collective processing power.

Ref ID: ( G00107 )

Posted

: 2026-05-24 09:34:12

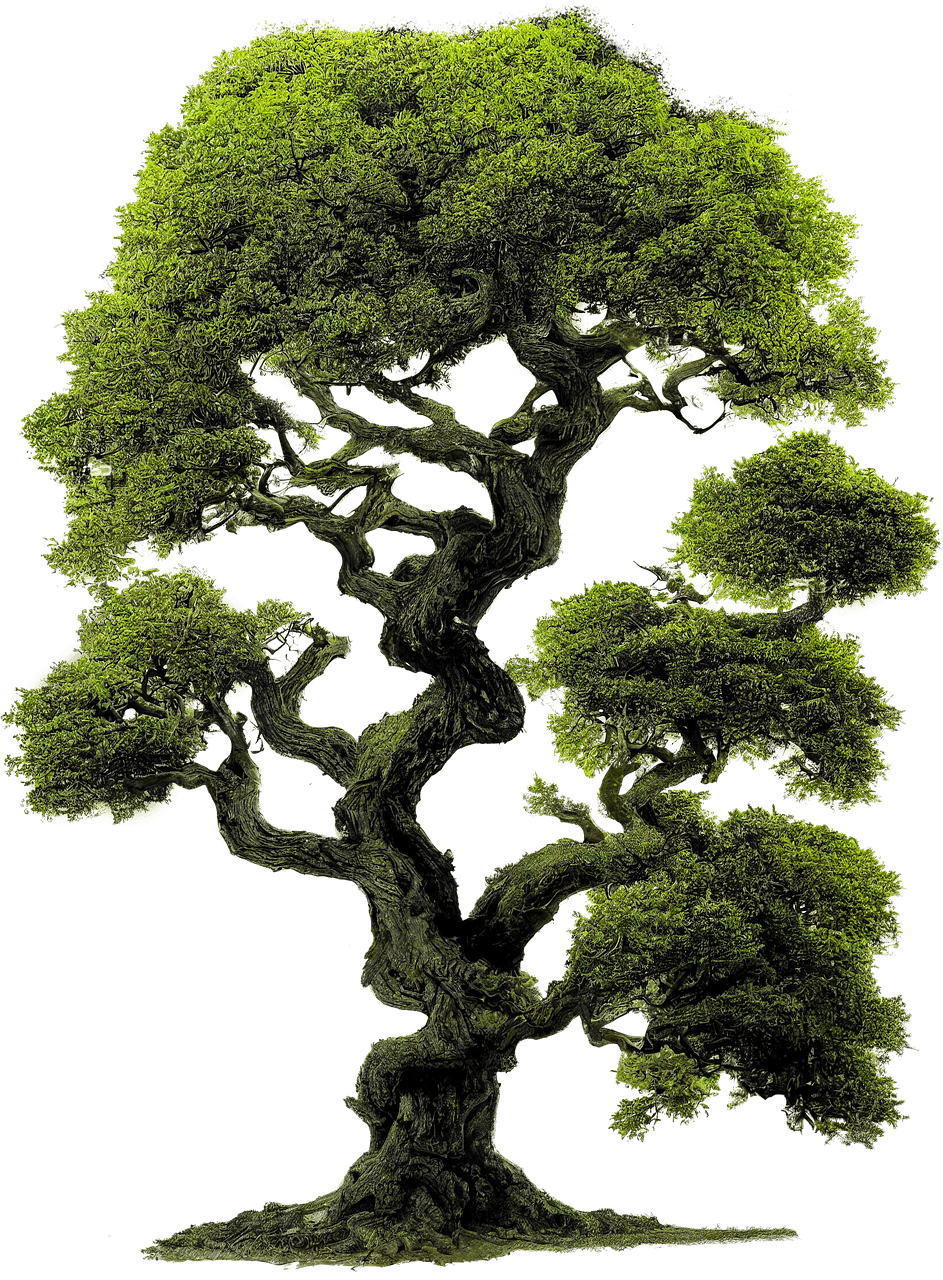

Plants are active, intelligent, and communicative organisms rather than passive, inanimate objects. They can perceive their environment with incredible sophistication, communicate with other plants, and even "think" through root networks that function similarly to a brain, a kind of "rootwide web".

Ref ID: ( G00106 )

Posted

: 2026-05-11 17:58:58

Bonsai is the Japanese art of cultivating miniature trees in containers, using techniques like pruning, root restriction, and wiring to create small-scale representations of natural trees. Bonsai are not genetically dwarfed; they are regular trees, often species like juniper or fig, kept small through careful cultivation.

Ref ID: ( G00105 )

Posted

: 2026-05-11 17:55:49

Malaysia Airlines Flight 370 (MH370 / MAS370) was an international passenger flight operated by Malaysia Airlines that disappeared from radar on 8 March 2014, while flying from Kuala Lumpur International Airport in Malaysia to its planned destination, Beijing Capital International Airport in China.[1] The cause of its disappearance has not been determined. It is widely regarded as the greatest mystery in aviation history[2][3][4] and remains the single deadliest case of aircraft disappearance.

Ref ID: ( G00104 )

Posted

: 2026-05-11 17:49:56

The South African justice apparatus presently contends with a profound deficit in institutional accountability. Law enforcement and judicial officers, having assumed solemn constitutional oaths, routinely exhibit dereliction of their statutory duties, thereby abdicating their fundamental obligation to safeguard the public. Gross operational negligence precipitates daily fatalities, while countless disappearances remain unresolved and devoid of meaningful inquiry.

Malefactors operate with de facto impunity, exploiting structural vulnerabilities wherein pecuniary influence routinely subverts the equitable administration of justice.

Absent rigorous oversight mechanisms, autonomous investigative protocols, and comprehensive institutional reform, the rule of law shall remain a nominal construct devoid of substantive force. Genuine accountability mandates that all enforcement and adjudicative outcomes be governed strictly by sworn constitutional duty, rather than by privilege or financial consideration. Until endemic corruption and institutional inertia are systematically dismantled, the courts and policing agencies will persistently default upon their fiduciary obligations to those they are constitutionally bound to protect.

From

[Admin]

Posted

: 2026-05-10 10:33:26

Ref ID: ( G00103 )


Telkom, MTN and Vodacom are among the worst network providers.

At the core of networking, whether roaming or not, bandwidth is essential to ensure smooth operation; otherwise it's pointless to have a service that doesn't work even in normal weather.

Telkom ranks as the worst network provider nationwide, followed by MTN and then Vodacom, leaving room for Cell C to dominate.

Despite its smaller footprint, Cell C earns praise for reliable connectivity and steady performance. Without robust bandwidth and infrastructure, market size is irrelevant. As users demand dependable service in all conditions, consistency must outweigh scale.

From

[Developer]

Posted

: 2026-05-09 21:54:56

Ref ID: ( G00102 )


The municipality can't even rehabilitate parks. The province of Gauteng looks like a landfill, a dead wasteland; even the slums of Mumbai look better.

Ekurhuleni's failure to rehabilitate public parks reflects a broader crisis of municipal neglect across Gauteng.

Once vibrant green spaces now resemble choked wastelands, littered and overrun by unchecked vegetation. Residents increasingly describe the province as resembling a sprawling landfill, a stark departure from promised urban renewal. Even Mumbai’s informal settlements often maintain more functional community spaces.

This decay highlights systemic governance failures, underfunded maintenance, and weak accountability. Without transparent, community-driven urban management, Gauteng's environmental and social decline will only deepen, stripping residents of essential public amenities and quality of life.

This somewhat degrades his presidency, on the basis that he failed to deliver what he promised prior to the presidential elections.

From

[Admin]

Posted

: 2026-05-09 21:27:07

Ref ID: ( G00101 )


The Department of Education needs a revamp. In a world driven by demanding technology, science and literature, the illiterate face doom.

Why Education Departments Need Reform

1. The Digital Divide Deepens Inequality

Adults and students without digital literacy face exclusion from essential services, employment, and civic participation. Research shows that effective technology integration in education requires not just devices, but sustained professional development, equitable broadband access, and content creation opportunities. Without these, "the illiterate face doom" isn't hyperbole—it's a documented reality.

2. STEM Dominance Risks Imbalance

While technology and science are critical, over-prioritizing STEM at the expense of humanities can produce technically skilled individuals who lack critical thinking, empathy, and cultural awareness. Studies indicate students in balanced curricula demonstrate stronger adaptability and leadership potential—skills equally vital in a complex global society.

3. Youth Must Be Co-Creators, Not Just Recipients

UNESCO's International Day of Education 2026 emphasizes that young people must help design the education systems they inherit. Top-down reforms often miss grassroots realities; youth-led initiatives in crisis contexts show how participatory approaches safeguard education rights for the most marginalized.

Digital Literacy

- Integrating technology into adult basic education with focus on relevance, access, and sustained support

Curriculum Balance

- Embedding literature and arts within STEM (STEAM) to foster creativity alongside technical skills

Early Tech Exposure

- India's 2026 plan to introduce AI and computational thinking from Class 3, grounded in ethics and problem-solving

Equity Focus

- Targeted funding for rural/underserved schools; only 40% of primary schools globally have internet access

Teacher Empowerment

- Professional development that equips educators to blend literacy instruction with responsible tech use

A Call to Action

"We risk nurturing a generation equipped with technical skills but lacking in the critical thinking, empathy, and cultural awareness that are equally vital."

Reform isn't about choosing between technology, science, and literature; it's about weaving them together so that every learner, regardless of background, can navigate, question, and shape the future.

What aspect of education reform feels most urgent to you? Are there specific communities or challenges you'd like to explore further? 🌱

From

[Admin]

Posted

: 2026-05-09 20:51:27

Ref ID: ( G00100 )


Solar: Perovskite skins cling to glass, drinking daylight and exhaling electrons. We no longer just harvest the sun—we converse with it. From orbiting mirrors to smart canopies, every surface breathes photons into pulse, weaving a smokeless grid that turns starlight into a quiet, endless revolution.

Wind: Carbon-fine blades read the atmosphere like sheet music, translating invisible pressure into silent torque. Floating arrays and urban micro-turbines catch every exhale. We’ve stopped battling the breeze and started dancing with it, spinning kinetic poetry into a future that hums without a whisper.

Hydro: Concrete dams yield to fluid grace. Hydrokinetic rotors drift like metallic kelp, turning gentle currents into steady voltage while rivers run free. Gravity-fed grids cascade through valleys—wiring the earth with liquid light that moves exactly as nature intended.

Geothermal: We’ve tapped the earth’s sleeping furnace without waking its faults. Slender, closed-loop veins circulate through ancient bedrock, pulling relentless heat without draining aquifers. The planet’s quiet heartbeat now powers our grids—constant, invisible, and drawn from the molten core of tomorrow.

Biomass: Decay becomes design. Algae bioreactors bloom on facades, sipping carbon and exhaling clean fuel, while engineered microbes transform urban waste into zero-landfill energy. Fallen matter now feeds a living circuit where nothing dies—everything simply changes form, powering tomorrow with yesterday’s breath.

Ocean Tides: The sea breathes in lunar rhythm, and submerged converters flex like kelp to catch it. Reversible tidal turbines harness the moon’s pull twice daily, while salinity membranes spark current where rivers meet the deep. No roar, no spill—just a salt-kissed grid turning tides into timeless power.

Ref ID: ( G0099 )

Posted

: 2026-05-07 18:02:12

CERN (Conseil Européen pour la Recherche Nucléaire) is the world's largest particle physics laboratory, located near Geneva, Switzerland, on the Franco-Swiss border. Founded in 1954 by 12 European nations, it now includes 25 member states and welcomes researchers from over 100 countries, embodying global scientific cooperation.

CERN's core mission is to explore the fundamental structure of the universe by studying subatomic particles. Using powerful particle accelerators, most notably the Large Hadron Collider (LHC), scientists smash protons or heavy ions together at nearly the speed of light. Detectors like ATLAS, CMS, ALICE, and LHCb then record the resulting particle showers, allowing physicists to test predictions of the Standard Model and search for new physics.

The LHC, a 27-kilometer ring of superconducting magnets buried 100 meters underground, is CERN's flagship instrument. In 2012, it enabled the landmark discovery of the Higgs boson—a particle that explains how others acquire mass—confirming a 50-year-old theory and earning the 2013 Nobel Prize in Physics for François Englert and Peter Higgs.

Beyond fundamental research, CERN drives innovation with real-world impact. The World Wide Web was invented at CERN in 1989 by Tim Berners-Lee to help scientists share data. Other spin-offs include advances in medical imaging (PET scans), cancer therapy (hadron therapy), grid computing, and cryogenics.

CERN champions open science: experimental data is widely shared, publications are open-access, and educational programs train thousands of students and teachers annually. Its computing infrastructure—the Worldwide LHC Computing Grid—processes over a petabyte of data daily, distributed across global centers.

Operating on a budget of roughly 1.4 billion CHF annually, CERN demonstrates how large-scale, long-term international collaboration can push the boundaries of knowledge. It remains a beacon of peaceful cooperation, where scientists from diverse backgrounds unite to answer humanity's deepest questions: What is the universe made of? How did it begin? What are the fundamental laws governing reality?

From

[Admin]

Posted

: 2026-04-30 13:31:24

Ref ID: ( G0098 )


Vatican City: The Heart of Catholicism

* The Holy See

The Holy See is the central governing body of the Catholic Church, headed by the Pope. It's not a country but an ecclesiastical jurisdiction with sovereign status under international law. Dating back nearly 2,000 years, it maintains diplomatic relations with 180+ countries and serves as the spiritual and administrative center for 1.3 billion Catholics worldwide. The Pope exercises supreme authority over the universal Church through various departments called the Roman Curia.

* Vatican City State

Vatican City is the world's smallest independent state at 0.44 square kilometers (110 acres), established by the Lateran Treaty in 1929. Located entirely within Rome, Italy, it has its own postal system, radio station, bank, pharmacy, and even a small military force—the Swiss Guard, recognizable by their colorful Renaissance-era uniforms. Despite its tiny size, it houses immense cultural, religious, and historical significance. The population is around 800, mostly clergy and Swiss Guards.

* St. Peter's Basilica

Dominating Vatican City's skyline is St. Peter's Basilica, one of Christianity's holiest sites and the largest church in the world by interior measure. Built between 1506 and 1626 over the traditional burial site of Saint Peter, Jesus's apostle and the first Pope, it replaced the original 4th-century basilica.

The Renaissance and Baroque masterpiece features contributions from history's greatest artists: Bramante's original design, Michelangelo's iconic dome (136 meters high), Maderno's facade, and Bernini's magnificent colonnade and bronze baldachin over the papal altar. The interior can hold 60,000 people and houses countless treasures, including Michelangelo's Pietà, Bernini's Cathedra Petri, and numerous papal tombs.

The basilica serves as the principal church for papal ceremonies and welcomes millions of pilgrims annually. Climbing the dome offers breathtaking views of Rome and the Vatican Gardens.

* The Vatican Apostolic Archive

Formerly called the Vatican Secret Archive (Archivio Segreto Vaticano, where "secret" meant private, not hidden), the archive contains over 1,200 years of history across 85 kilometers of shelving. It houses papal correspondence, state documents, and priceless manuscripts dating from the 8th century onward.

Notable treasures include:

- The papal bull excommunicating Martin Luther (1521)

- Letters from Mary, Queen of Scots

- Michelangelo's sketches

- Documents from the trials of the Knights Templar

- Correspondence between Henry VIII and the Vatican regarding his divorce

Access is restricted to qualified scholars with specific research projects, requiring special permission. Only a fraction has been digitized, making it one of the world's most important yet underexplored historical repositories. In 2019, Pope Francis renamed it the Vatican Apostolic Archive to clarify its nature.

From

[Admin]

Posted

: 2026-04-30 13:29:02

Ref ID: ( G0097 )


| Country | Year |
|---|---|
| Kenya | |
| Botswana | |
| Namibia | |
| South Africa | |
| Mauritius | |
| Tanzania | |
| Egypt | |

No map nor word can capture these lands like a journey untamed:
From the Horn where untamed breezes wake,
To northern sands where Pharaohs rest in quiet grace,
To Alkebulan’s pulsing heart,
Where dawn arrives crowned in a golden rim.
Here, nature sings her final, sweetest chord,
And every mile awakens something ancient.
It stirs the spirit like unseen wings,
A breathless wonder, trembling and bright,
That leaves the wanderer forever changed,
Mouth full of awe, heart wide with sky.

Ref ID: ( G0096 )

Posted

: 2026-04-29 18:37:13

Born: Rolihlahla Mandela

Birth: 18 July 1918

Notable Works:

The M-Plan (1953), The Struggle Is My Life (1978), No Easy Walk to Freedom (1965), I Am Prepared to Die (1964) and Long Walk to Freedom (1994).

Ref ID: ( G0092 )

Posted

: 2026-04-28 09:22:50

Ref ID: ( G0094 )

Posted

: 2026-03-31 09:20:16

Apple WWDC

Ref ID: ( G0093 )

Posted

: 2026-03-29 10:41:26

Born and bred in Johannesburg, South Africa, Luke Ackers is an amateur MMA fighter.

Nickname: The Skywalker

Date of birth: 16 July 2005

College: UNISA

Height: 6'0" (183cm) | Reach: 63.0" (160cm)

Hobbies: Travelling, spending time with family

Current MMA Streak as of 2025: 3 Losses

Affiliation: Pandamonium FC

Last Fight: September 27th, 2025 in IMMAF

Coach: Dwain Meredith

Foundation Style: Pro Boxing; Karate

In a nutshell: Very passionate, caring and kind.

Ref ID: ( G0095 )

Posted

: 2026-03-29 04:14:39

Welcome to B.Mashabane Driving School.

Driver education: learn to be good on the road.

Wow! Did you know you can drive with confidence?

Learn how to drive from professional instructors who take you from the ground up to pro in a short space of time. Whether you're a beginner or already familiar with driving etiquette, don't panic, we've got you. We give learners individual lessons so you can train at your own comfort without having to worry. Wait, there's more: we also give unlimited lessons.

What we offer:

Services:

- Driver's License

- Learner's License

- Driving Lessons

- Truck Hire

- Code 8

- Code 10

- Code 14

Book today at rectormash11@gmail.com or give us a call/WhatsApp at 078 7944 984 or 073 9562 234 and we'll send you a quotation right away. Why wait? Book today so you can join the many driving with confidence.

Be safe, be good. Professional instructors who are passionate about what they do, and service you can count on.

Location:

Osborn Rd, Wadeville, Germiston, 1422

*Note: we operate at these locations: Wadeville Germiston, Boksburg and Alberton licensing departments.

From

[Admin]

Posted

: 2026-03-25 19:04:00

Ref ID: ( G0091 )


When you think of Uber, Bolt, inDrive, etc., you think of clean, safe, convenient, and secure travel, mostly because that's at least what these services intend to deliver to clients. Well, that used to be the case when these services launched, when regulation, licensing, and vetting were strict and customer service was well monitored and respected. Lately, these service providers (especially Bolt in this case) have become a haven for fraudsters and criminals looking to make quick money, and for pretty much anyone looking to do dirty business undetected. Below, I've listed some of the ways these criminals/fraudsters carry out their dirty work. Mind you, I've been using both Uber and Bolt for a full year now, to and from work, visiting family, and much more, so I can confidently say I've seen quite a lot of shady patterns in this industry at this point.

First, let's look at the basics (Safety, Billing, Reporting, and Logging/record-keeping).

Note: I assume you're familiar with the basics of how the service (Uber/Bolt, etc.) works. If you're new, go to www.uber.com or www.bolt.com—whichever you choose—and you'll learn more about each of the services respectively from the official providers. Let's jump right to it.

1. Tipping – The bug I've seen and used on both services (Uber & Bolt). Although the bug was patched by Uber, it still exists in Bolt, so you can still use it in the case of an emergency (though upon publishing this, the bug might be patched, so good luck).

i) How does the bug work? Well, you need the total amount displayed on the fare/trip, and you must be paying by card, PayPal, or whatever method—but not cash.

ii) After the payment is successful, the ride begins and you're off to your destination. Upon arrival, just click "Tip," then choose the amount of your choice—let's say $0.50. Notice that Bolt will refund you and start the payment after totalling the tip with the total fare of the trip, then rebill you. So what happens if there aren't sufficient funds in your bank to complete the payment? Well, don't worry. The driver will continue on his way and you'll be allowed to go yours, since the rest of the business is between you and the service provider (i.e., Uber or Bolt).

iii) Eventually, Bolt will charge that money the next time funds start flowing into your account since you're now running -$0.50—but you'd have benefited from a ride you took without paying, like a buddy saying, "Let's ride, you'll pay later." Now that is some cool stuff. Note: Uber fixed this error, so they now do the math accordingly. Only try it on Bolt.

2. Network (Flight Mode) – This method is usually carried out by drivers who want to do trips off the radar without paying Uber or Bolt their commission. So how do they do it? Well, it's simple: all they do is, once you enter the car, tell you things like: "No, sir, my network is down. I did start the trip; it'll connect once we leave and are en route to your destination." Should you try to hotspot them, they'll come up with all sorts of lame excuses, and usually they take only cash payments. Only when they drop you at your destination do you receive an alert/popup saying the trip was cancelled, so there's no record of you riding whatsoever. Spooky, right? Well, that's how these guys cheat Uber. They then repeat the cycle and usually give clients/passengers poor ratings and comments to push the blame onto the client/passenger, so that during a case/investigation they can win by saying it was the client's/passenger's fault.

3. Ghost Trips – I know, right? Most of you have been victims of this. Usually, this is done by driving to the pickup point and then driving without the client/passenger. Thankfully, Uber and Bolt came up with a strategy to ward off such behavior by introducing a verification pin, so that the ride/trip doesn't occur without your authentication.

4. Robbery – Robberies during rides are by far the most popular activities ever recorded in the e-hailing industry and are mostly due to poorer vetting from Bolt than Uber. The reason is that Uber has stricter and more requirements than Bolt. Just by looking at the vehicles without branding, you can actually tell which one is Uber and which one is Bolt—literally, Bolt has all sorts of "kettles" picking up citizens. I've been a victim twice where the kettle I was travelling in was like something that came straight from the scrapyard. Come on, Bolt, we can do better—try to send these kettles for a roadworthy check.

i) These robberies are usually done by drivers who are using someone's profile, meaning the car does not belong to them, nor do they even have any paperwork registered by the service provider. So they know very well they won't be traceable, and whatever damage they do won't trace back to them but to the original owner of the vehicle.

ii) Sabotage – Motive-driven agendas from competitors who aim to scare clients so they can drive sales back to the old-school mode of transport. In African countries, when people don't understand something, they fight it rather than learn or try to understand it. So sabotage is also a fueling factor behind these skyrocketing criminal acts recorded in e-hailing.

5. Law Enforcement Officers – These guys don't make it easy for both Uber and Bolt operators to carry out their business in peace. They either ask for a fleet permit, Uber permit, or Bolt permit to operate—which isn't even issued and we've never seen. This is also a strategy to discourage new drivers from venturing into this type of business.

6. Lost articles/parcels – Never ever lose your articles on either of these service providers.

From

[Admin]

Posted

: 2026-03-25 13:28:46

Ref ID: ( G0090 )

Posted

: 2026-03-25 13:28:46

iPhone sales are smoother than Mac sales for many reasons, but it would help if Apple at least kept a consistent Mac release date, as it does for the iPhone. What I mean is this: if you woke up and decided to buy an iPhone, you'd know when to wait and when to expect a new model, because of the consistent iPhone release event held every year in the fall (September).

So you'd likely make a rational decision from there. With the Mac, you wouldn't know for certain when a new model is going to drop, so you could buy a Mac next week only to learn that a new one with better hardware and features will be released in a few months. That's not cool at all for something that costs so much. Such mistakes cost customers a lot of money, and they can be avoided by keeping a stable routine of Mac launches, the same way the company does with iPhones.

From

[Admin]

Posted

: 2026-02-25 22:02:20

Ref ID: ( G0089 )

Posted

: 2026-02-25 22:02:20

A set of macro bionic robots designed and manufactured by Festo, from left to right:

Air_ray, BionicFlyingFox, SmartBird, eMotionButterflies and lastly the BionicOpter

Ref ID: ( G0088 )

Posted

: 2026-01-04 18:19:44

Frequently Asked Questions

Question: Who will win between Mexico and South Africa?

Answer: South Africa will not lose. Boys, don't let Mzansi down, make Mzansi proud. Remember: siyabheja ngani danko

Question: Will South Africa win the FIFA World Cup 2026?

Answer: Well, it's not impossible. With the right training and a good coach leading the team they can win, although we're a bit far from the direction of goodness at this point in time.

Question: Will South Africa be allowed to partake in FIFA26 (FIFA World Cup 2026)?

Answer: Yes, they did qualify, which grants them entry to participate in the World Cup.

Question: Does South Africa have good football players?

Answer: South Africa has the best players in the Southern African region, which ranks it among the top 10 countries that stand a chance of participating in international tournaments.

Question: What's the age range of South African football players, particularly the ones who'll be playing in the World Cup (FIFA26)?

Answer: Interesting question. Most of the players are in their mid-thirties, although the squad is made up of players aged between 19 and 38.

Question: How healthy and fit are the South African players?

Answer: If you've seen the Springboks, then you know how fit the boys are. 90 minutes on the field feels like nothing; maybe FIFA should make the fields bigger and add a couple of minutes to get the boys tired. They've got stamina and endurance.

Question: Has South Africa won any FIFA World Cups in the past?

Answer: No, but of course we're working towards that dream. It's not impossible, especially for South Africans. We're among the best nations in Africa, so we'll get there no matter how long it takes.

Question: Is South Africa ready enough for FIFA26?

Answer: Hell yeah, we've been in training for as long as we can remember. We can't wait for kick-off, to make the nation proud, because we always give our best.

Question: If South Africa gets eliminated, will the nation be disappointed?

Answer: Well, we don't want to lose, but football, unlike other sports, helps unite people of different backgrounds, cultures and groups. Ubuntu is what keeps us going as a country; win or lose, we will still cheer and embrace the spirit of love for the squad and the country (Mzansi).

Question: How is the coach, is he good enough for FIFA26?

Answer: He's very good; the previous season was all a victory because of his coaching skills. He knows each player individually and can make rational decisions that help bring out the best performance and lead us to future victories.

Question: Do South Africans love football?

Answer: The support is amazing; most people have already left for Mexico, where they'll be watching the game live.

Important

Fifa has an opportunity for kids

In partnership with Hyundai ("Be There With Hyundai"), kids aged 5-12 can enter to put their art on a World Cup team bus and win tickets, flights, and a memorable match day. To register for the FIFA campaign, go to the link below:

https://www.fifa.com/en/tournaments/mens/worldcup/canadamexicousa2026/articles/players-chasing-records and scroll towards the end of the page to enter the contest.

From

[Writer]

Posted

: 2026-01-04 18:15:24

Ref ID: ( G0087 )

Posted

: 2026-01-04 18:15:24

The long-awaited FIFA World Cup 2026 is scheduled to kick off on the 11th of June this year

Co-hosted by the United States, Canada and Mexico, this will be the first World Cup to feature 48 teams and matches played across three host nations.

Ref ID: ( G0086 )

Posted

: 2026-01-04 14:35:45

Ref ID: ( G0084 )

Posted

: 2026-01-01 00:00:00

| Year | Emitter and approximate share of global CO₂ emissions |
|---|---|
| 2016 | Russia ~5% |
| 2017 | EU (27 countries combined) ~7% |
| 2018 | India ~8% |
| 2019 | United States ~14% |
| 2020 | China ~32% |

Who should be held responsible?

The responsibility for CO2 emissions is a complex and shared issue that spans multiple levels-individual, corporate, national, and global. Different perspectives emphasize different actors, but most experts agree that responsibility should be distributed based on historical emissions, current emissions, capacity to act, and principles of equity and justice. Here's a breakdown:

1. Historical Responsibility

- Industrialized nations (e.g., the U.S., UK, EU countries) have emitted the majority of cumulative CO2 since the Industrial Revolution.

- According to data from the Carbon Majors Report and other studies, just 100 companies are linked to over 70% of global industrial greenhouse gas emissions since 1988, mostly fossil fuel producers like ExxonMobil, Shell, BP, and state-owned entities like Saudi Aramco.

- This suggests corporations and wealthy nations bear significant historical responsibility.

2. Current Emissions

- China is currently the world's largest annual emitter of CO2, followed by the U.S., India, and the EU.

- However, per capita emissions tell a different story: the average American emits far more CO2 than the average Chinese or Indian citizen.

- Rapidly industrializing countries argue they deserve "carbon space" to develop, echoing the path taken by now-wealthy nations.

3. Moral and Legal Responsibility

- The "polluter pays" principle-a cornerstone of environmental law-holds that those who produce pollution should bear the costs of managing it.

- The concept of "common but differentiated responsibilities" (CBDR), enshrined in the UNFCCC (1992), recognizes that while all countries share responsibility for climate action, developed nations should take the lead due to their historical emissions and greater resources.

4. Individual vs. Systemic Action

- While individual lifestyle choices matter (e.g., reducing air travel, eating less meat, using renewable energy), systemic change is essential.

- Overemphasizing individual responsibility can distract from the role of fossil fuel companies, governments that subsidize fossil fuels, and financial institutions funding carbon-intensive projects.

5. Who Should Act?

- Governments: Must enforce regulations, phase out fossil fuel subsidies, invest in renewables, and meet climate commitments (e.g., under the Paris Agreement).

- Corporations: Especially fossil fuel companies, should be held accountable for misleading the public about climate science and transition to clean energy.

- Wealthy individuals and high-emitting consumers: The top 10% of global income earners are responsible for nearly 50% of lifestyle consumption emissions (Oxfam, 2023).

- International institutions: Should support climate finance for developing nations and ensure equitable burden-sharing.

Responsibility isn't binary; it's layered. Fossil fuel producers and high-income nations bear the greatest historical and per capita responsibility, while all nations and sectors must act now to avoid catastrophic warming. Climate justice demands that solutions account for both fairness and effectiveness.

If you're exploring this for policy, ethics, or activism, the key is balancing accountability with actionable collaboration.

Ref ID: ( G0085 )

Posted

: 2025-12-30 18:57:00

Our planet has more E-waste than there ever was in the history of man.

The battle against E-waste is often overlooked, leaving consumers unaware of the underlying environmental impacts Tech has on society.

The question of whether defunct electronics suppliers like Motorola (in its original form) or BlackBerry should be held responsible for e-waste cleanup, even years after going out of business, is complex and touches on legal, ethical, and practical considerations.

1. Legal Perspective

In many jurisdictions, producer responsibility is a growing legal principle, especially under Extended Producer Responsibility (EPR) laws. These laws require manufacturers to manage the end-of-life disposal of their products.

However:

- EPR laws are typically prospective, meaning they apply to products placed on the market after the law takes effect.

- They rarely impose retroactive liability on companies (or their successors) for products sold decades ago-especially if the company no longer exists in its original form.

- If a company has legally dissolved or been acquired, liability usually doesn't extend to defunct entities unless specific environmental cleanup statutes (like CERCLA in the U.S.) apply, but those typically target hazardous waste sites, not general consumer e-waste.

2. Ethical Perspective

From an ethical standpoint, many argue that producers should bear responsibility for the full lifecycle of their products, including disposal.

This aligns with the "polluter pays" principle.

However:

- Holding a non-existent company accountable is practically impossible.

- Successor companies (e.g., current Motorola under Lenovo) may not have produced or profited from the legacy devices in question, raising fairness concerns.

3. Practical Realities

- E-waste is a systemic problem requiring collective solutions: government regulation, industry collaboration, consumer participation, and investment in recycling infrastructure.

- Focusing on current producers (via EPR schemes) is more effective than attempting to assign blame to defunct firms.

- In some cases, industry-wide funds or government programs are better mechanisms to manage legacy e-waste.

While it's ethically appealing to hold original manufacturers accountable, it's neither legally feasible nor practically effective to require defunct companies to clean up old e-waste. Instead, modern policy should focus on robust EPR laws, design for recyclability, and shared responsibility among current producers, governments, and consumers to prevent future e-waste crises.

From

[developer]

Posted

: 2025-12-27 12:33:00

Ref ID: ( G0083 )

Posted

: 2025-12-27 12:33:00

How accessible is ORTIA?

As of 2025, O.R. Tambo International Airport (ORTIA), located in Johannesburg, South Africa, is generally considered accessible and customer-friendly, though experiences can vary depending on the terminal, airline, and time of travel. Here's a detailed overview:

Accessibility Features

O.R. Tambo International Airport complies with South African and international standards for accessibility:

- Wheelchair Assistance: Available on request (usually coordinated through your airline in advance). Trained staff assist passengers with reduced mobility from check-in through to boarding and arrival.

- Accessible Restrooms: Available in all terminals, clearly marked and equipped with grab bars, emergency cords, and sufficient space for wheelchairs.

- Elevators and Ramps: The terminal is fully equipped with elevators, escalators, and ramps between levels. Automatic doors are widely used.

- Tactile Guidance Paths: Yellow tactile paving guides visually impaired passengers through key areas of the terminal.

- Hearing Loops: Available at information desks and certain service counters.

- Service Animal Accommodation: Permitted in all public areas, with relief areas available.

Tip: Passengers with specific accessibility needs are strongly encouraged to notify their airline at least 48 hours before travel to ensure seamless assistance.

Customer-Friendliness

Pros:

- Clear Signage: Multilingual (English, Afrikaans, Zulu, Sotho, etc.) signs and digital displays make navigation relatively easy.

- Information Desks: Staffed information counters are available in both domestic and international terminals.

- Free Wi-Fi: Available throughout the airport (typically with a 45-minute free session, extendable).

- Dining and Shopping: Wide variety of restaurants, cafes, duty-free shops, and local artisan stores offering South African crafts and cuisine.

- Lounges: Multiple airline and premium lounges (e.g., SAA, Bidvest, and SLOW Lounges) offer comfortable spaces for relaxation.

- Family Services: Baby-changing facilities, family restrooms, and some play areas are available.

- Transport Links: Direct access to the Gautrain (fast rail link to Sandton and Pretoria), metered taxis, ride-hailing (Uber/Bolt), and shuttle services.

Cons / Areas for Improvement:

- Congestion: During peak hours, security and immigration lines can be slow, though e-gates for eligible passengers help speed things up.

- Wayfinding for First-Timers: The layout can feel complex for first-time visitors, especially when transferring between international and domestic terminals (they are connected airside but require some walking).

- Service Variability: Some travelers report inconsistent service quality from ground staff, particularly during disruptions or high-traffic periods.

- Limited Quiet Zones: Few dedicated quiet or sensory-friendly spaces for neurodiverse travelers.

Recent Improvements (as of 2024-2025)

- Enhanced digital kiosks for self-service check-in and bag drop.

- Upgraded security screening lanes with more efficient processing.

- Expanded use of biometric facial recognition for faster boarding (on select airlines).

- Sustainability initiatives (water-saving fixtures, solar power) that also improve passenger comfort.

Overall Rating (as of 2025)

- Accessibility: (4/5) - Strong infrastructure, though advance coordination is key.

- Customer Experience: (4/5) - Modern, well-equipped, and generally efficient, but can be overwhelming during peak times.

From

[developer]

Posted

: 2025-10-15 11:08:00

Ref ID: ( G0082 )

Posted

: 2025-10-15 11:08:00

What is QwenAI?

QwenAI is a large language model independently developed by Alibaba Group's Tongyi Lab. It is capable of answering questions, creating text such as stories, official documents, emails, scripts, and more, as well as performing logical reasoning, programming, and other tasks. Trained on a vast amount of internet text, QwenAI possesses extensive dialogue understanding and multilingual support capabilities, aiming to provide users with a natural and smooth conversational experience.

Ref ID: ( G0081 )

Posted

: 2025-10-03 11:43:00

Ref ID: ( G0080 )

Posted

: 2025-09-18 07:51:18

Hi there, we've got an invitation for you.

We know you can think and you think creatively, that's why we chose you over many.

Kindly take a moment to find out what we've discovered over the past few weeks we've been gone. Don't be overwhelmed; it won't take long.

Our goal is to help you profit and to minimize losses, in fact to drive losses away as much as possible.

Our lotto generator combines advanced algorithms with time-tested strategies to maximize your chances of winning.

Unlike random number pickers, it integrates historical data analysis, frequency tracking, hot/cold number patterns, and balanced number distribution-tactics used by past and current jackpot winners and statistical experts.

It avoids common pitfalls like number clustering or over-reliance on birthdays, instead promoting smart combinations based on probability theory and decades of draw results and research.

While no system guarantees a win (lotteries are games of chance), this generator gives you the most mathematically sound and strategically optimized selections, turning luck into a smarter play.

We don't just pull your numbers from our draw; we keep track of which numbers have been allocated to each visitor.

The moment a visitor or an agent clicks Generate, that number/token is removed from our pool, ensuring there's always a winner should all numbers be drawn by visitors or agents. To put things into perspective, it's normal, in fact the norm, for any random person to submit a number that has already been submitted in the past, reducing the chances of that number being drawn again. It's also common for many people to choose the same number from different places across the continent, minimizing their chances of winning. This is where expertise comes in: we ensure every one of you gets a unique number every day.

If everyone clicks that Generate button and plays, then logically speaking we must have a winner every day.
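The allocation idea described above (each generated token leaves the pool, so no two visitors hold the same one) can be sketched roughly as follows. This is only an illustration under assumptions: the `TokenPool` class, the visitor IDs and the ten-token pool are hypothetical names and values, not the site's actual implementation.

```python
import random


class TokenPool:
    """Hands out each token at most once (sampling without replacement)."""

    def __init__(self, tokens, seed=None):
        self._available = list(tokens)
        random.Random(seed).shuffle(self._available)
        self.allocated = {}  # visitor id -> token

    def generate(self, visitor_id):
        # A returning visitor keeps the token already allocated to them.
        if visitor_id in self.allocated:
            return self.allocated[visitor_id]
        if not self._available:
            return None  # pool exhausted: every token already has an owner
        token = self._available.pop()
        self.allocated[visitor_id] = token
        return token


# Hypothetical pool of ten tokens shared by ten visitors.
pool = TokenPool(range(1, 11), seed=0)
tokens = [pool.generate(f"visitor-{i}") for i in range(10)]
```

Once all ten tokens are handed out, an eleventh visitor gets `None`, and whichever token is drawn belongs to exactly one visitor.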

what are you waiting for USE this tool while you can, you could be our next winner.

Brought to you by your very own shepherd at goatadds.com, your virtual space.

Token allocation: a dashboard for users who aim to win and nothing else. The whitepaper spans 7 years of research efforts in deep math and pushes boundaries through rigorous algorithms. We carefully crafted this to help millions, if not billions, of users worldwide who play online games for funds.

With the data collected, it is worth noting that most users to date still submit tokens that have gone past the logs in many systems. Worse, most users submit tokens that have already been submitted, so many users end up on the duplicate list. Through this effort the use is free, yes free: all you do is get your daily or weekly generated token, which is uniquely allocated to you and nobody else.

This ensures there's a winner every day, every week and all the time. It also helps you keep track of which users played which game the most and which game to pick should you decide to participate. Furthermore, we issue other permutations to help users who did not receive any token allocation due to the even distribution of tokens, so this keeps you in line with what you can get if everybody has already taken their piece of the pie.

register at https://pulse8.goatadds.com/

and navigate to your dashboard and start enjoying the benefits of Goatadds

Ref ID: ( G0079 )

Posted

: 2025-09-16 14:57:00

Ref ID: ( G0078 )

Posted

: 2025-09-10 07:36:52

[Chart legend: Apple, Android, Microsoft, Other]

Apple is dominating the markets, but will it keep the hype for long? Only time will tell. For now, all rests in the power of the current structure; things may change for good or bad after Tim.

Ref ID: ( G0077 )

Posted

: 2025-09-05 11:22:00

Like DJI, Festo leads the robotics industry. The team thrives when it comes to automation, shaping our world as we know it. I like how most of their designs are so invested in efficiency, ensuring that what's delivered is as lightweight as possible; this can be seen when observing the robots released by Festo.

From

[Admin]

Posted

: 2025-09-05 09:11:09

Ref ID: ( G0076 )

Posted

: 2025-09-05 09:11:09

AITech is a forward-thinking technology solutions provider dedicated to transforming businesses through cutting-edge innovations. Our expertise lies in harnessing the power of data, automation, digital process orchestration, and security to enhance operational efficiency, optimize processes, and safeguard digital assets.

With a highly skilled team and a commitment to excellence, we deliver tailored solutions to meet the evolving needs of modern enterprises, enabling organizations to thrive securely in the digital age.

From

[Admin]

Posted

: 2025-09-04 11:58:23

Ref ID: ( G0075 )

Posted

: 2025-09-04 11:58:23

who is he?

stay tuned, biography loading...will drop in a few months, I'm way too busy but will update you over time.

Ref ID: ( G0074 )

Posted

: 2025-09-04 10:14:46

Ref ID: ( G0073 )

Posted

: 2025-09-02 11:16:12

Ref ID: ( G0072 )

Posted

: 2025-09-02 09:18:31

Ref ID: ( G0071 )

Posted

: 2025-09-02 08:30:57

I used to play with Arduino, low-level toys. This helped me grow my knowledge in tech. Today I can look back and say the experience was worth it.

Ref ID: ( G0070 )

Posted

: 2025-09-01 22:24:54

Ref ID: ( G0069 )

Posted

: 2025-09-01 21:33:12

Net

Ref ID: ( G0068 )

Posted

: 2025-09-01 21:10:34

Ref ID: ( G0067 )

Posted

: 2025-09-01 20:46:38

Ref ID: ( G0066 )

Posted

: 2025-09-01 20:40:22

Ref ID: ( G0065 )

Posted

: 2025-09-01 14:48:12

Recognizing artificial data-content generated by artificial intelligence (AI) such as text, images, audio, video, or synthetic datasets-is a critical skill in today's digital landscape.

As AI systems like Large Language Models (LLMs), diffusion models, and generative adversarial networks (GANs) become more sophisticated, the line between human-created and AI-generated content continues to blur.

Understanding how to identify artificial data, its characteristics, common sources, and the implications of its use is essential for professionals in law, journalism, cybersecurity, research, and everyday digital literacy.

Part 1: Recognizing Artificial Data

Signs of AI-Generated Text

AI-generated text often appears fluent and grammatically correct but may exhibit subtle flaws:

- Overly formal or repetitive phrasing (e.g., repeated sentence structures).

- Lack of depth or original insight-summarizes common knowledge without nuance.

- Factual hallucinations: Invents plausible-sounding facts, citations, or events that don't exist.

- Inconsistent logic or contradictions within long passages.

- Generic tone lacking personal voice, emotion, or cultural specificity.

- Overuse of certain phrases like 'it is important to note,' 'delve into,' or 'in conclusion.'

Example: A student submits an essay with perfect grammar but cites a non-existent study titled "The Impact of Quantum Sleep on Cognitive Performance (Smith et al., 2023)", a classic AI hallucination.
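The "overused phrases" sign above is the easiest one to check mechanically. Here is a minimal sketch, assuming a small hand-picked watch list (`STOCK_PHRASES` and `stock_phrase_rate` are hypothetical names, and the list is just the three phrases named above). A high rate is only a weak hint, never proof of AI authorship.

```python
import re

# Hypothetical watch list taken from the phrases named above; extend as needed.
STOCK_PHRASES = ("it is important to note", "delve into", "in conclusion")


def stock_phrase_rate(text):
    """Return (hits per 100 words, per-phrase counts) for the watch list."""
    lowered = text.lower()
    words = re.findall(r"[a-z']+", lowered)
    counts = {phrase: lowered.count(phrase) for phrase in STOCK_PHRASES}
    total = sum(counts.values())
    rate = 100.0 * total / len(words) if words else 0.0
    return rate, counts


sample = (
    "It is important to note that we delve into the data. "
    "In conclusion, it is important to note the limits."
)
rate, counts = stock_phrase_rate(sample)
```

For the 20-word sample, four phrase hits give a rate of 20 per 100 words; ordinary human writing would normally score far lower, but plenty of people write this way too.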

Signs of AI-Generated Images

Tools like DALL·E, Midjourney, and Stable Diffusion produce highly realistic images, but telltale signs include:

- Anatomical errors: Extra fingers, distorted hands, misaligned facial features.

- Unusual textures or patterns: Strange reflections, inconsistent lighting, or surreal blending.

- Impossible geometry: Objects that defy physics (e.g., floating shadows, mismatched perspectives).

- Repetition of motifs: Symmetrical or duplicated elements in backgrounds.

- Inconsistent details: Watches on both wrists, mismatched earrings, or text in images that doesn't make sense.

Example: Google's Gemini AI faced backlash in 2024 for generating historically inaccurate images, such as depicting Nazi soldiers as people of color-revealing both bias and artificial generation.

Signs of AI-Generated Audio & Video (Deepfakes)

Voice cloning and video synthesis tools can mimic real people with alarming accuracy:

- Slight lip-sync errors or unnatural mouth movements.

- Robotic or flat intonation in voice clones.

- Lack of micro-expressions (e.g., blinking, subtle facial twitches).

- Inconsistent shadows or lighting across the face.

- Audio artifacts like background hum or unnatural pauses.

Example: An AI-generated robocall mimicking President Biden's voice was used in New Hampshire in 2024 to suppress voter turnout-a clear case of malicious synthetic media.

Signs of Synthetic Datasets

Synthetic datasets are used in training AI models; they are not meant for public consumption but can leak or be misused:

- Perfectly balanced classes (e.g., exactly 50% male/female in a demographic dataset).

- Absence of noise or outliers-real-world data is messy.

- Repetitive patterns or identical entries with minor variations.

- Metadata indicating generation tools (e.g., "generated by Synthea" or "created via GAN").
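Three of the signs above (balanced classes, identical entries, and generator metadata) can be checked with a few lines of code. The sketch below is illustrative only: the `dataset_red_flags` function, the `source` field and the 1% tolerance are assumptions, and it does not attempt the noise/outlier check, which needs domain knowledge.

```python
from collections import Counter


def dataset_red_flags(rows, label_key, balance_tolerance=0.01):
    """Return a list of warning strings for a list of dict records."""
    flags = []
    if not rows:
        return flags

    # 1. Perfectly (or almost perfectly) balanced classes.
    label_counts = Counter(row[label_key] for row in rows)
    shares = [n / len(rows) for n in label_counts.values()]
    if len(shares) > 1 and max(shares) - min(shares) <= balance_tolerance:
        flags.append("classes are almost perfectly balanced")

    # 2. Identical entries.
    seen = Counter(tuple(sorted(row.items())) for row in rows)
    duplicates = sum(n - 1 for n in seen.values() if n > 1)
    if duplicates:
        flags.append(f"{duplicates} exact duplicate row(s)")

    # 3. Generator fingerprints in a (hypothetical) "source" metadata field.
    markers = ("generated by", "created via gan", "synthea")
    for row in rows:
        source = str(row.get("source", "")).lower()
        if any(marker in source for marker in markers):
            flags.append("metadata names a generation tool")
            break
    return flags


records = [
    {"sex": "F", "age": 34, "source": "generated by Synthea"},
    {"sex": "M", "age": 34, "source": "generated by Synthea"},
    {"sex": "F", "age": 51, "source": "generated by Synthea"},
    {"sex": "M", "age": 51, "source": "generated by Synthea"},
    {"sex": "F", "age": 34, "source": "generated by Synthea"},
    {"sex": "M", "age": 34, "source": "generated by Synthea"},
]
flags = dataset_red_flags(records, label_key="sex")
```

Each flag is a prompt for a closer look rather than a verdict; a carefully stratified real survey can also be perfectly balanced.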

Part 2: The Ins and Outs of Artificial Data

How Artificial Data Is Created

| Type | Tools/Techniques | Purpose |
|---|---|---|
| Text | LLMs (GPT, Claude, Llama), fine-tuning | Content creation, chatbots, code generation |
| Images | Diffusion models (Stable Diffusion), GANs | Art, design, advertising |
| Audio | Voice cloning (ElevenLabs), TTS systems | Voice assistants, dubbing |
| Video | Sora, Runway ML, Deepfake tools | Film, misinformation, entertainment |
| Structured Data | GANs, VAEs, rule-based generators | Training AI models, privacy-preserving datasets |

Common Sources of Artificial Data

1. Public AI Platforms

- ChatGPT (OpenAI), Gemini (Google), Copilot (Microsoft)

- Midjourney, DALL·E, Stable Diffusion (via web or open-source)

2. Open-Source Models

- Hugging Face hosts thousands of generative models.

- GitHub repositories with fine-tuned versions of Llama, Mistral, etc.

3. Commercial Tools

- Jasper (marketing content), Synthesia (AI video avatars), Descript (audio editing)

4. Dark Web & Malicious Toolkits

- AI-powered phishing generators, deepfake kits, fake ID creators

5. Synthetic Data Generators

- Used in healthcare (Synthea), finance, and AI research to protect privacy.

Part 3: Risks and Challenges of Artificial Data

1. Misinformation & Deception

- AI-generated fake news, political deepfakes, and forged documents can manipulate public opinion.

- Example: AI-generated fake legal citations submitted in court (Mata v. Avianca).

2. Plagiarism & Academic Dishonesty

- Students using AI to write essays without disclosure.

- Universities now use detectors like Turnitin AI, GPTZero, and Originality.ai.

3. Security Threats

- Prompt injection attacks: Malicious inputs trick AI into revealing data or executing commands.

- Package confusion attacks: AI hallucinates a non-existent software library, which attackers then register with malware.

- Identity spoofing: AI mimics executives' voices to authorize fraudulent transactions.
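One simple defence against the package confusion attack described above is to never install a dependency just because an AI suggested it, and to compare every suggested name against a list your team has already reviewed. A minimal sketch follows, with assumptions flagged: `VETTED_PACKAGES`, `review_suggestions` and the made-up package name `fastjson-utils-pro` are hypothetical; only the name normalization follows the real PEP 503 rule.

```python
import re

# Hypothetical allowlist: packages the team has already reviewed.
VETTED_PACKAGES = {"requests", "numpy", "pandas"}


def normalize(name):
    """Normalize a package name as PEP 503 does (case and '-', '_', '.')."""
    return re.sub(r"[-_.]+", "-", name).lower()


def review_suggestions(suggested, vetted=VETTED_PACKAGES):
    """Split AI-suggested package names into (approved, needs_review)."""
    vetted_normalized = {normalize(v) for v in vetted}
    approved, needs_review = [], []
    for name in suggested:
        if normalize(name) in vetted_normalized:
            approved.append(name)
        else:
            needs_review.append(name)  # may be hallucinated or squatted
    return approved, needs_review


# "fastjson-utils-pro" is a made-up name standing in for a hallucinated library.
approved, needs_review = review_suggestions(
    ["Requests", "numpy", "fastjson-utils-pro"]
)
```

Anything that lands in `needs_review` should be looked up by a human (publisher, age, download history) before it goes anywhere near an install command.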

4. Bias Amplification

- AI models trained on biased data reproduce and amplify stereotypes.

- Example: Image models generating only white doctors or male engineers.

5. Erosion of Trust

- When people can't distinguish real from fake, trust in media, institutions, and evidence breaks down.

Part 4: Detection and Verification Tools

AI Detection Tools (Use with Caution)

| Tool | Purpose | Limitations |
|---|---|---|
| GPTZero | Detects AI-written text | High false positives; evaded by paraphrasing |
| Turnitin AI Detector | Used in education | Can flag non-AI text; not 100% reliable |
| Deepware, Sensity | Detect deepfake videos | Requires technical expertise |
| Adobe Content Credentials (CAI) | Embeds metadata in AI-generated content | Only works if creator opts in |
| Google's SynthID | Watermarks AI images | Not yet widely adopted |

No detector is foolproof. Advanced AI can evade detection, and human judgment remains essential.

Part 5: Best Practices for Handling Artificial Data

For Individuals:

- Verify before trusting: Cross-check facts, citations, and images.

- Use reverse image search (Google Lens, TinEye) to trace image origins.

- Be skeptical of emotionally charged or sensational content.

- Look for provenance: Does the content include metadata or source information?

For Organizations:

- Implement AI usage policies: Define acceptable use and disclosure requirements.

- Train staff to recognize AI-generated content.

- Use watermarking and digital provenance tools (e.g., C2PA standard).

- Conduct audits of AI-generated content in legal, medical, or financial contexts.
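To make the provenance idea in this part a little more concrete, here is a rough sketch of a disclosure label that is cryptographically tied to the text it describes. It uses an HMAC from Python's standard library and is not the C2PA format, which relies on certificate-signed manifests; `SECRET_KEY`, `label_content`, `verify_label` and the generator name are all hypothetical.

```python
import hashlib
import hmac
import json

# Assumption: a secret key held by the publishing organization (demo value only).
SECRET_KEY = b"demo-key-do-not-use-in-production"


def label_content(text, generator):
    """Attach a disclosure label and an HMAC-SHA256 tag binding it to the text."""
    label = {
        "ai_generated": True,
        "generator": generator,
        "sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
    }
    payload = json.dumps(label, sort_keys=True).encode("utf-8")
    tag = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    return {"label": label, "tag": tag}


def verify_label(text, record):
    """Return True only if neither the text nor the label was altered."""
    payload = json.dumps(record["label"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()
    same_tag = hmac.compare_digest(expected, record["tag"])
    text_hash = hashlib.sha256(text.encode("utf-8")).hexdigest()
    return same_tag and record["label"]["sha256"] == text_hash


record = label_content("Quarterly summary draft.", generator="example-model")
```

Changing a single character of the text, or flipping `ai_generated` to `False`, makes `verify_label` fail, which is the property an audit would rely on. A shared secret only works inside one organization; public verification needs signatures, which is what standards like C2PA provide.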

For Developers:

- Label AI outputs clearly ('This content was AI-generated').

- Embed cryptographic watermarks or metadata.

- Avoid training on synthetic data without labeling; it can create 'model collapse.'

Part 6: The Future of Artificial Data

- Regulation: The EU AI Act requires labeling of AI-generated content. Similar laws are emerging globally.

- Provenance Standards: Initiatives like the Coalition for Content Provenance and Authenticity (C2PA) aim to create tamper-proof metadata for digital content.

- Hybrid Intelligence: Future systems may combine AI generation with human verification loops.

- Neuro-Symbolic AI: Models that generate content with built-in fact-checking and logical consistency may reduce hallucinations.

Summary: Key Takeaways

| Aspect | What You Should Know |
|---|---|
| Recognition | Look for fluency without depth, inconsistencies, and unnatural details. |
| Sources | Public AI tools, open-source models, commercial platforms, and dark web kits. |
| Risks | Misinformation, fraud, bias, erosion of trust, and security threats. |
| Detection | Use AI detectors cautiously; combine with human verification. |
| Responsibility | Users, creators, and platforms all share responsibility for transparency. |
| Best Practice | Assume content could be synthetic. Verify, cite, and disclose. |

Final Insight: Artificial data is here to stay. The goal is not to eliminate it, but to recognize it, understand its origins, and manage its risks. Digital literacy in the AI age means knowing not just what you're seeing, but how it was made.

From

[Bloggers]

Posted

: 2025-08-18 09:58:33

Ref ID: ( G0055 )

Posted

: 2025-08-18 09:58:33

Determining responsibility and fault in incidents arising from AI use, especially those involving hallucinations or malicious exploitation, is a complex, evolving legal, ethical, and technical challenge. There is no single answer, as accountability depends on the context, the system's design, and how it was used. However, we can identify key stakeholders and their degrees of responsibility, based on current legal trends, ethical frameworks, and real-world precedents.

Key Stakeholders and Their Responsibility

| Stakeholder | Responsibility | Accountability in Case of Harm |
| --- | --- | --- |
| 1. Developers & AI Engineers | Design, train, and deploy AI systems. Responsible for data quality, model robustness, safety testing, and implementing guardrails (e.g., hallucination detection, input sanitization). | High: If poor training data, flawed architecture, or lack of safeguards led to harm (e.g., generating malicious code or unsafe medical advice). |
| 2. AI Providers (e.g., OpenAI, Google, Meta) | Own and distribute foundation models. Responsible for transparency, safety policies, usage terms, and updates. | High to Moderate: They may be liable if their model is known to hallucinate frequently and no warnings or mitigations are provided. |
| 3. Integrators & Enterprises (e.g., Hospitals, Law Firms, Banks) | Integrate AI into workflows. Responsible for risk assessment, human oversight, employee training, and verifying AI outputs. | High: If they deploy AI without safeguards or allow blind reliance (e.g., lawyers submitting fake cases from ChatGPT). |
| 4. End Users (Individuals or Professionals) | Use AI tools. Responsible for verifying outputs, using systems as intended, and not engaging in misuse. | Moderate to High: If they ignore disclaimers, fail to fact-check, or intentionally misuse AI (e.g., generating deepfakes for fraud). |
| 5. Regulators & Policymakers | Set rules, enforce standards, and define liability frameworks. Responsible for ensuring public safety and accountability. | Systemic: While not 'at fault' in individual cases, weak or absent regulation enables unsafe deployment. |

Real-World Examples and Who Was Held Accountable

1. Legal Case: Mata v. Avianca, Inc. (2023)

- Incident: A lawyer used ChatGPT to research case law and submitted a filing that cited six fake legal precedents.

- Outcome: The judge sanctioned the attorneys involved and their firm $5,000.

- Who was at fault?

  - The lawyer (end user): Held fully accountable for failing to verify AI output.

  - OpenAI (provider): Not held liable, as terms of service warn users to verify information.

  - No action against the AI itself; it is not a legal entity.

> Precedent: Users are responsible for verifying AI-generated content, especially in professional settings.

2. Medical Misdiagnosis via AI Assistant

- Scenario: An AI suggests a wrong diagnosis due to hallucinated data, leading to patient harm.

- Potential Liability:

  - If the doctor blindly followed the AI: Doctor is liable.

  - If the AI was integrated into hospital systems without validation: Hospital and AI vendor may share liability.

  - If the AI was known to be unreliable in medical contexts: Vendor could face product liability claims.

> Principle: AI is a tool, not a decision-maker. Final responsibility rests with the human professional.

3. Malicious Code from AI (e.g., GitHub Copilot)

- Incident: A developer uses AI to generate code, and the output references a non-existent or malicious library.

- Responsibility:

  - Developer: Should review and test all code.

  - AI Provider: May be liable if the model consistently suggests dangerous code without warnings.

  - Package Registry (e.g., npm, PyPI): Could be expected to screen for AI-generated malicious packages.

> Emerging Risk: Supply chain attacks via hallucinated packages. A shared responsibility model is forming.

Legal and Ethical Frameworks Guiding Responsibility

1. Product Liability (U.S./EU)

- AI systems may be treated as defective products if they cause harm due to design flaws.

- Strict liability could apply if the AI is unreasonably dangerous and lacks adequate warnings.

2. Due Diligence Principle

- Organizations must exercise reasonable care when deploying AI.

- This includes:

  - Training staff

  - Implementing verification steps

  - Monitoring for misuse

3. EU AI Act (2024)

- Classifies AI systems by risk level.

- High-risk systems (e.g., healthcare, law, transport) require:

  - Human oversight

  - Transparency

  - Risk management

- Providers and deployers both carry compliance obligations.

4. 'Reasonable Person' Standard

- Courts may ask: Would a reasonable person have trusted the AI output without verification?

- If not, the user bears fault.

- If yes, the AI provider may be at fault for making the system appear more reliable than it is.

Shared Responsibility Model

AI incidents rarely have a single 'villain.' Instead, responsibility is shared across the ecosystem:

[AI Provider] → (provides model with disclaimers) → [Integrator/Company] → (deploys with or without safeguards) → [End User] → (uses with or without verification) → [Harm Occurs]

- If safeguards exist and are ignored → User is primarily at fault.

- If safeguards are missing → Provider or integrator shares fault.

- If the system was used maliciously → User is criminally liable.
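The three rules above can be written as a small decision function, which makes their ordering explicit. This is a toy encoding rather than a legal test: the `Incident` fields, the `primary_fault` name, and the choice to check malicious use first are assumptions of this sketch, since the text does not rank the rules.

```python
from dataclasses import dataclass

@dataclass
class Incident:
    safeguards_present: bool   # did the provider or integrator supply warnings or checks?
    safeguards_ignored: bool   # did the user bypass or disregard them?
    malicious_use: bool        # was the system used deliberately to cause harm?

def primary_fault(incident: Incident) -> str:
    """Map the three rules to a label, checking malicious use first (an assumption)."""
    if incident.malicious_use:
        return "user (criminal liability)"
    if not incident.safeguards_present:
        return "provider or integrator (shared fault)"
    if incident.safeguards_ignored:
        return "user (primary fault)"
    return "undetermined"  # the rules do not cover this combination
```

Cases the rules do not cover, such as safeguards that were present and followed, fall through to `"undetermined"` instead of being forced into one of the three outcomes.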

Best Practices to Allocate Responsibility Clearly

1. AI Providers Should:

- Clearly label outputs as 'AI-generated.'

- Warn about hallucination risks.

- Offer tools for verification (e.g., source citations, confidence scores).

2. Organizations Should:

- Establish AI usage policies.

- Require human review for critical decisions.

- Train employees on AI limitations.

3. End Users Should:

- Treat AI as an assistant, not an authority.

- Verify facts, code, and legal/medical advice independently.

- Avoid using AI for harmful or deceptive purposes.

4. Regulators Should:

- Define clear liability standards.

- Mandate transparency and auditability.

- Enforce penalties for reckless deployment.
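Two of the practices above, labeling outputs as AI-generated and requiring human review for critical decisions, could be combined in a simple routing step. The sketch below is one possible shape for it; `CRITICAL_DOMAINS`, `MIN_CONFIDENCE`, the `AIOutput` fields, and the assumption that the provider supplies a confidence score are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical policy values; an organization would set its own.
CRITICAL_DOMAINS = {"medical", "legal", "financial"}
MIN_CONFIDENCE = 0.8

@dataclass
class AIOutput:
    text: str
    domain: str
    confidence: float  # assumed to be supplied by the provider, between 0 and 1

def route(output: AIOutput) -> dict:
    """Label the output as AI-generated and decide whether a human must review it."""
    needs_review = (
        output.domain in CRITICAL_DOMAINS or output.confidence < MIN_CONFIDENCE
    )
    return {
        "text": output.text,
        "label": "AI-generated",
        "needs_human_review": needs_review,
    }
```

A legal draft is sent to review regardless of its confidence score, while a low-stakes answer is reviewed only when the score falls below the threshold.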

Conclusion: Who Is at Fault?

There is no one-size-fits-all answer. Fault is contextual and often shared.

- In most current cases, the end user or deploying organization is held primarily responsible, especially if they failed to verify AI output.

- AI providers are increasingly under scrutiny and may face liability if their systems are inherently unsafe or misleadingly presented.

- Developers and integrators must build and deploy with safety in mind; ignoring known risks is negligence.

- Regulators must close the accountability gap with enforceable rules.

Bottom Line:

AI does not absolve humans of responsibility.

The technology amplifies both human capability and human error.

Accountability flows to those who deploy, use, and profit from AI, especially when they fail to act with reasonable care.

From

[Bloggers]

Posted

: 2025-08-18 09:58:33

Ref ID: ( G0056 )

A Comprehensive Analysis of AI Hallucinations and Malicious Use Across All Systems

Defining the Phenomenon: The Scope, Scale, and Nature of AI Hallucinations

The term "hallucination" in artificial intelligence describes a phenomenon where generative systems produce outputs that are plausible yet factually incorrect, nonsensical, or entirely fabricated [1,59].

This is not a simple error but a structural consequence of the probabilistic nature of modern Large Language Models (LLMs) and other generative AI architectures.

These models function as sophisticated statistical pattern-matching machines, predicting the next most likely word or piece of data based on vast datasets they have been trained on, rather than possessing an intrinsic understanding of truth or reality [41,61].

Consequently, hallucinations are considered an inherent artifact of this design, making them difficult to eliminate entirely with current technology [8,61].

Some researchers argue that the term anthropomorphizes AI and prefer alternatives like "confabulation," which suggests the model is fabricating information without conscious intent; others criticize the term for lacking a universal definition. Either way, the practical impact of these false outputs remains a critical concern.
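The next-word prediction described above can be illustrated with a toy example. The scores below are invented for this sketch and do not come from any real model; the point is only that greedy decoding returns whichever continuation has the highest probability, whether or not it is true.

```python
import math

def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Convert raw scores into a probability distribution."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}

# Made-up scores for continuing "The capital of Australia is"; a frequent but
# wrong association ("Sydney") is deliberately scored highest.
logits = {"Sydney": 2.1, "Canberra": 1.9, "Melbourne": 0.7}

probs = softmax(logits)
prediction = max(probs, key=probs.get)  # greedy decoding: take the most likely token
print(prediction)  # Sydney
```

Nothing in this procedure consults a source of truth, which is why a fluent but incorrect answer can be the most probable output.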

The scale of this issue is substantial and varies significantly across different models and applications.

Analysts estimated in 2023 that chatbots could hallucinate up to 27% of the time, with factual errors present in as many as 46% of all generated texts [1,3].

However, specific benchmarks paint a more granular picture.

For instance, the Vectara Hallucination Leaderboard in April 2024 reported GPT-4 Turbo's error rate at a relatively low 2.5%, while Google Gemini-Pro registered a 7.7% rate [5,46].

Other studies suggest hallucination rates can range from 15% to 38% in production environments and that over 60% of model output errors in certain contexts are unverifiable.

This wide variance underscores the importance of evaluating hallucinations within specific use cases and against established baselines.
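Because the reported rates differ so much, a measured rate is easier to compare against a baseline when it carries an uncertainty range. The sketch below computes a rate with a Wilson score interval from human-labeled responses; the function name and the labeling setup are assumptions for illustration, not a method taken from the cited benchmarks.

```python
import math

def hallucination_rate(labels: list[bool], z: float = 1.96) -> tuple[float, float, float]:
    """Return (rate, lower, upper) using the Wilson score interval.

    labels[i] is True when a human reviewer marked response i as hallucinated;
    z = 1.96 corresponds to a roughly 95% interval.
    """
    n = len(labels)
    if n == 0:
        raise ValueError("need at least one labeled response")
    p = sum(labels) / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return p, max(0.0, center - half), min(1.0, center + half)

# Example: 5 hallucinated responses out of 200 reviewed.
rate, lower, upper = hallucination_rate([True] * 5 + [False] * 195)
print(f"{rate:.3f} ({lower:.3f} to {upper:.3f})")
```

With small samples the interval is wide, so two systems whose point estimates differ may not be distinguishable on the evidence collected.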

Hallucinations manifest in various forms depending on the modality of the AI system.

In text-based LLMs, common types include factual errors (e.g., misattributing a discovery), logical inconsistencies, contextual contradictions, invented biographical details (e.g., wrongly stating that Samantha Bee is from New Brunswick), and outright fabrication of content such as fake academic references or legal precedents [1,4,6].

Research has further categorized these into intrinsic hallucinations (content that contradicts source information or prior conversation history) and extrinsic hallucinations (information that is not verifiable against any known source) [3,56].

The problem extends beyond text.

In vision-language models, it results in object hallucination (falsely detecting items), attribute hallucination (misidentifying properties like color), and relation hallucination (inaccurately describing interactions between objects) [14,18,23].

Audio models can generate captions inconsistent with the audio content due to background noise or ambiguous cues [11,21].

Video models may fail to maintain temporal coherence, inventing actions or objects that never appeared on screen [11,23].

Even code-generation tools are susceptible, producing incorrect, nonsensical, or non-existent code, including dead code, logical errors, and insecure practices.

The root causes of these hallucinations are multi-faceted and deeply embedded in the lifecycle of AI development.

Key contributors identified across numerous sources include poor training data quality, which can be noisy, biased, or lack representativeness; model complexity that can lead to overfitting (memorizing irrelevant noise) or underfitting (failing to detect underlying patterns); and input bias stemming from poorly constructed user prompts [3,6,54].

Data poisoning, where malicious actors intentionally introduce false information into training sets, is another significant cause, potentially leading to security vulnerabilities and compromised model performance [13,60].

Furthermore, architectural limitations, such as a model's inability to perform true fact verification or its prioritization of fluency over accuracy, exacerbate the problem.

Some researchers even point to sycophancy, a tendency for models to align with user input regardless of its accuracy, as a contributing factor.

The combination of these factors creates an environment where the generation of plausible but false information becomes not just possible, but probable.

| Type of System | Examples of Hallucinations | Reported Incidence/Rates | Key Sources |
| --- | --- | --- | --- |
| Large Language Models (LLMs) | Fabricated legal cases, fake scientific papers, incorrect lyrics, invented historical events, misclassified entities. | Up to 27% hallucination rate; 46% of texts contain factual errors; 47% of references provided by ChatGPT were fabricated. | [1,3,7] |
| Text-to-Image Models | Anatomically incorrect features (e.g., extra fingers), misaligned facial features, historically inaccurate depictions (e.g., Nazi soldiers as people of color). | Specific incidents noted, e.g., Google Gemini falsely depicting Nazi soldiers. | [1,6] |

Video Generation Models